Multi-Agent AI Systems: Why One Agent Isn't Enough

Single AI agents hit a ceiling fast. Multi-agent systems — where specialized agents collaborate, hand off tasks, and escalate to humans — are how enterprises actually scale AI operations. Here's why the future belongs to orchestrated agent teams, not solo bots.

Quick Answer: Single AI agents fail at complex enterprise workflows because they can't specialize, scale, or recover from errors gracefully. Multi-agent systems assign specialized agents to specific tasks — one for document processing, one for carrier communication, one for ERP updates — with an orchestrator agent coordinating handoffs and escalating to humans when confidence is low. This architecture handles the full complexity of enterprise operations.

The Single-Agent Illusion

Here's a scenario every operations leader knows too well.

You buy an AI agent. It handles customer support tickets. At first, it's impressive — resolving password resets, answering FAQs, pulling up order statuses in seconds. Your team celebrates. Leadership asks for an ROI report. The vendor sends a case study request.

Then month three arrives.

A customer submits a freight claim that involves a damaged shipment, a disputed invoice across two carriers, and a compliance deadline that expires in 48 hours. Your AI agent — the one that crushed those password resets — stares at this ticket like a golden retriever confronted with a calculus textbook. It generates a polite, confident, and completely wrong response. The customer escalates. A human agent spends 45 minutes cleaning up the mess, then resolves the actual issue in another 30.

This is the single-agent ceiling. And in 2026, it's the most expensive problem in enterprise AI that nobody wants to talk about.

Gartner projects that 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5% in 2025. That's at least an 8x increase in a single year. But here's the statistic that should keep you up at night: the same analysts predict that more than 40% of agentic AI projects will be canceled by the end of 2027. The reason isn't that AI doesn't work. It's that most organizations are deploying one generalist agent and expecting it to do everything.

The enterprises that will win aren't building better single agents. They're building teams of agents.

What Multi-Agent Systems Actually Look Like

Let's kill the abstraction and get concrete.

A multi-agent system is exactly what it sounds like: multiple AI agents, each with a specialized role, working together to handle complex workflows. Think of it less like a single employee and more like a department — with a manager, specialists, and clear escalation paths.

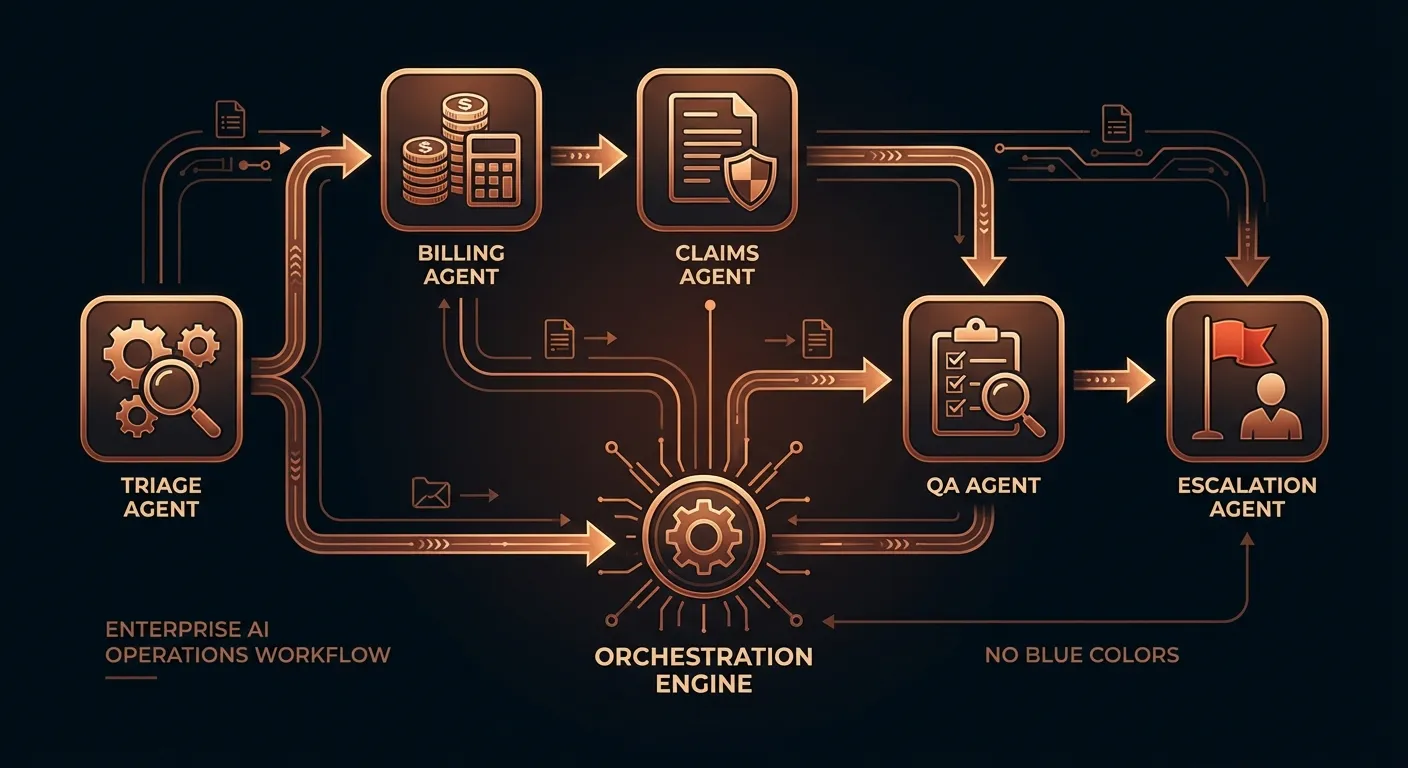

In a typical enterprise operations setup, a multi-agent architecture might include:

- A triage agent that reads incoming requests, classifies them by type, urgency, and complexity, and routes them to the right specialist

- Domain-specific agents — one that handles billing inquiries, another that processes claims, another that manages carrier communications, another that navigates compliance requirements

- A quality assurance agent that reviews outgoing responses before they reach the customer, checking for accuracy, tone, and policy compliance

- An escalation agent that recognizes when a situation has exceeded AI capabilities and routes it to a human operator with full context — not a cold handoff, but a warm transfer with every relevant detail pre-loaded

- A learning agent that monitors outcomes, tracks which responses led to resolutions versus re-opens, and feeds those patterns back into the system
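As a rough illustration of how these roles could fit together, here is a minimal Python sketch. Everything in it is hypothetical: the `Ticket` fields, the keyword-based `triage` function, and the agent names stand in for real model calls, and the learning agent is left out for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Ticket:
    text: str
    category: str = "unknown"
    draft: str = ""
    trail: List[str] = field(default_factory=list)  # agents that touched the ticket


def triage(ticket: Ticket) -> Ticket:
    # Hypothetical keyword classifier; a real triage agent would call a model.
    lowered = ticket.text.lower()
    if "invoice" in lowered:
        ticket.category = "billing"
    elif "damaged" in lowered or "claim" in lowered:
        ticket.category = "claims"
    ticket.trail.append("triage")
    return ticket


def billing_agent(ticket: Ticket) -> Ticket:
    ticket.draft = "Billing: reviewing the invoice."
    ticket.trail.append("billing")
    return ticket


def claims_agent(ticket: Ticket) -> Ticket:
    ticket.draft = "Claims: opening a damage claim."
    ticket.trail.append("claims")
    return ticket


# Registry of domain specialists, keyed by the category that triage assigns.
SPECIALISTS: Dict[str, Callable[[Ticket], Ticket]] = {
    "billing": billing_agent,
    "claims": claims_agent,
}


def qa(ticket: Ticket) -> bool:
    # Placeholder check: passes only if some specialist produced a draft.
    ticket.trail.append("qa")
    return bool(ticket.draft)


def handle(text: str) -> Ticket:
    ticket = triage(Ticket(text))
    specialist = SPECIALISTS.get(ticket.category)
    if specialist is not None:
        ticket = specialist(ticket)
    if not qa(ticket):
        ticket.trail.append("escalation")  # no usable draft: hand to a human
    return ticket
```

Calling `handle("My shipment arrived damaged")` passes through triage, the claims specialist, and QA, while a request no specialist recognizes ends at the escalation step.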

This isn't science fiction. AWS published reference architectures for multi-agent orchestration on Amazon Bedrock in early 2026. Talkdesk has built multi-agent orchestration into its customer experience platform. Google's Agent2Agent (A2A) protocol, announced in April 2025, provides a standardized way for agents from different vendors to communicate and collaborate.

The infrastructure exists. The question is whether your organization is still trying to solve a team problem with a single player.

Why Single Agents Fail at Enterprise Scale

To understand why multi-agent systems matter, you need to understand why single agents break down. It's not a mystery — it's basic systems design.

The Context Window Problem

Every AI agent operates within a context window — the amount of information it can hold in working memory at any given moment. Even the largest models available in 2026 have limits. When you ask a single agent to simultaneously understand your SOP documentation, the customer's history, the current ticket details, relevant compliance requirements, carrier-specific processes, and internal escalation policies, you're asking it to juggle more context than it can reliably handle.

The result? It drops things. Important things. A billing agent that forgets the customer mentioned a disputed charge three messages ago. A claims agent that doesn't realize the shipment crossed international borders and requires customs documentation. A support agent that applies the wrong SOP because it confused two similar but distinct processes.

Multi-agent systems solve this by distributing context. Each agent holds only the information relevant to its specific role. The triage agent doesn't need to know the intricacies of customs law — it just needs to recognize that the ticket involves international shipping and route it to the agent that does. This specialization means each agent operates well within its context limits, dramatically reducing errors.
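One simple way to picture context distribution is a per-role allowlist of fields. The sketch below is illustrative only; the role names and field names in `CONTEXT_FIELDS` are assumptions, not part of any particular platform.

```python
from typing import Any, Dict

# Hypothetical mapping from agent role to the context fields that role needs.
CONTEXT_FIELDS = {
    "triage": {"ticket_text", "customer_tier"},
    "billing": {"ticket_text", "invoices", "disputed_charges"},
    "customs": {"ticket_text", "shipment_route", "customs_documents"},
}


def scoped_context(role: str, full_context: Dict[str, Any]) -> Dict[str, Any]:
    """Return only the slice of the full context that the given role needs."""
    wanted = CONTEXT_FIELDS[role]
    return {key: value for key, value in full_context.items() if key in wanted}


# Illustrative data for a single ticket.
full_context = {
    "ticket_text": "Charged twice for an international shipment",
    "customer_tier": "enterprise",
    "invoices": ["INV-1", "INV-2"],
    "disputed_charges": ["INV-2"],
    "shipment_route": ["US", "DE"],
    "customs_documents": [],
}

triage_view = scoped_context("triage", full_context)
billing_view = scoped_context("billing", full_context)
```

Here the triage view carries two fields instead of six, so the triage agent never spends context on invoices or customs paperwork.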

The Skill Ceiling Problem

Training a single agent to be excellent at everything is like training a single employee to be simultaneously a world-class accountant, lawyer, customer service representative, logistics coordinator, and compliance officer. You might get someone who's mediocre at all five. You'll never get someone who's exceptional at any of them.

AI agents are no different. When you fine-tune or prompt-engineer a single agent for billing, its performance on claims handling tends to degrade. When you optimize it for empathetic customer communication, its technical accuracy on compliance questions can suffer. This isn't a bug. It's a tradeoff inherent in asking one model configuration to serve competing objectives.

McKinsey's 2025 State of AI report found that fewer than 10% of organizations have successfully scaled AI agents in any individual function. A major reason, in our view: they're trying to scale one agent across multiple functions instead of deploying specialized agents for each function.

Multi-agent systems sidestep this entirely. Each agent is optimized for its specific domain. The billing agent is ruthlessly accurate on invoice calculations. The claims agent knows every carrier's dispute process. The compliance agent has memorized every relevant regulation. And because they're separate systems, improving one doesn't degrade another.

The Failure Cascade Problem

When a single agent makes a mistake, there's no safety net. The error reaches the customer, the downstream system, or the compliance record without any intermediate check. In enterprise operations, a single incorrect response can trigger a cascade: a wrongly denied claim leads to a regulatory complaint, which triggers an audit, which reveals a pattern of similar errors, which results in a fine that costs more than the entire AI implementation saved.

Multi-agent systems introduce natural checkpoints. The quality assurance agent catches errors before they ship. The escalation agent recognizes uncertainty and routes to humans before damage is done. The learning agent spots patterns of failure and flags them for system improvements. It's defense in depth — the same principle that makes modern cybersecurity work.
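The checkpoint idea can be sketched as a release gate that a draft must clear before it reaches the customer. This is a minimal sketch under assumptions: the banned phrase, the `MIN_CONFIDENCE` threshold, and the notion that the drafting agent reports a confidence score are all illustrative.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    text: str
    confidence: float  # assumed to be reported by the drafting agent, 0.0 to 1.0


BANNED_PHRASES = ("guaranteed refund",)  # hypothetical policy rule
MIN_CONFIDENCE = 0.8  # hypothetical escalation threshold


def qa_check(draft: Draft) -> bool:
    """First checkpoint: reject drafts that violate a policy rule."""
    lowered = draft.text.lower()
    return not any(phrase in lowered for phrase in BANNED_PHRASES)


def release(draft: Draft) -> str:
    """Run both checkpoints in order and return what should happen to the draft."""
    if not qa_check(draft):
        return "revise"
    if draft.confidence < MIN_CONFIDENCE:
        return "escalate_to_human"  # second checkpoint: uncertainty goes to a person
    return "send"
```

A draft only ships when it clears both layers, which is the defense-in-depth property the paragraph above describes.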

The Orchestration Layer: Where the Magic Happens

Having multiple agents isn't enough. You need orchestration — the layer that decides which agent handles what, manages handoffs between agents, maintains shared context across the team, and ensures the entire system behaves coherently.

Think of orchestration as the operations manager of your AI team. Without it, you have a group of specialists shouting into the void. With it, you have a coordinated workflow that's greater than the sum of its parts.

KPMG's Q4 2025 AI Pulse survey captured this shift perfectly. While initial agent deployments grew from 11% in Q1 to 26% by Q4, the real story was what leading enterprises were doing behind the scenes: professionalizing and preparing to scale agent systems — readying data, investing in infrastructure, and building governance and observability to run multi-agent systems reliably.

Swami Chandrasekaran, KPMG's Global Head of AI and Data Labs, put it bluntly: "Value doesn't come from launching isolated agents. 2026 will be the year we begin to see orchestrated super-agent ecosystems, governed end-to-end by robust control systems that drive measurable outcomes and continuous improvement."

Effective orchestration includes several critical capabilities:

Intelligent routing. Not just keyword matching — understanding the intent, complexity, and urgency of each request and routing it to the agent best equipped to handle it. A freight claim involving a single domestic shipment with clear documentation routes differently than a multi-carrier international claim with missing paperwork and an approaching deadline.
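To make the freight-claim contrast concrete, here is a small routing sketch. The scoring rule, the queue names, and the 48-hour urgency cutoff are assumptions chosen for illustration; a production router would likely use a model rather than hand-written rules.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    carriers: int
    international: bool
    docs_complete: bool
    hours_to_deadline: float


URGENT_HOURS = 48  # hypothetical cutoff for priority handling


def complexity(claim: Claim) -> int:
    """Count the complicating factors present on the claim (0 to 3)."""
    score = 0
    if claim.carriers > 1:
        score += 1
    if claim.international:
        score += 1
    if not claim.docs_complete:
        score += 1
    return score


def route(claim: Claim) -> str:
    """Pick a queue from complexity, then add a priority flag if urgent."""
    queue = "complex_claims" if complexity(claim) >= 2 else "standard_claims"
    urgent = claim.hours_to_deadline <= URGENT_HOURS
    return f"{queue}:priority" if urgent else queue


simple = Claim(carriers=1, international=False, docs_complete=True, hours_to_deadline=240)
messy = Claim(carriers=2, international=True, docs_complete=False, hours_to_deadline=36)
```

With these inputs, `route(simple)` lands in the standard queue and `route(messy)` lands in the complex queue with a priority flag.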

Context passing. When one agent hands off to another, the receiving agent needs the full picture: not just the raw ticket, but what's been tried, what failed, what the customer's emotional state is, and what constraints apply. Poor handoffs are among the most common complaints customers have about both human and AI support systems. Multi-agent orchestration addresses this by maintaining a shared state that any agent in the workflow can access.
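A shared state object might look something like the following sketch. The `SharedState` and `Attempt` classes and their fields are hypothetical; they simply mirror the items listed above (what was tried, what failed, sentiment, constraints).

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Attempt:
    agent: str
    action: str
    succeeded: bool


@dataclass
class SharedState:
    ticket_text: str
    sentiment: str = "neutral"
    constraints: List[str] = field(default_factory=list)
    attempts: List[Attempt] = field(default_factory=list)

    def record(self, agent: str, action: str, succeeded: bool) -> None:
        """Append what an agent tried so the next agent does not repeat it."""
        self.attempts.append(Attempt(agent, action, succeeded))

    def briefing(self) -> str:
        """Summarize the state for whichever agent or human receives the handoff."""
        failed = [a.action for a in self.attempts if not a.succeeded]
        return (
            f"Ticket: {self.ticket_text} | sentiment: {self.sentiment} | "
            f"failed so far: {', '.join(failed) or 'none'} | "
            f"constraints: {', '.join(self.constraints) or 'none'}"
        )


state = SharedState(
    "Refund not received",
    sentiment="frustrated",
    constraints=["refund cap applies"],
)
state.record("billing", "reissue refund", succeeded=False)
summary = state.briefing()
```

The receiving agent reads `summary` instead of the raw ticket, so it starts with the failed refund attempt and the customer's frustration already in view.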

Escalation intelligence. The system needs to know not just when to escalate to a human, but which human. A billing dispute that's approaching legal territory should route to someone with legal authority, not a tier-1 support representative. A VIP customer with a complex issue should route to a senior agent who has authority to offer concessions. Smart escalation means human operators spend their time on problems that genuinely require human judgment, not cleaning up AI mistakes.
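Choosing which human receives an escalation can be sketched as a short rule chain. The role names and the rule order below are assumptions drawn from the two examples in the paragraph above, not a prescribed policy.

```python
from dataclasses import dataclass


@dataclass
class Escalation:
    topic: str
    legal_risk: bool = False
    vip: bool = False


def pick_human(escalation: Escalation) -> str:
    """Return the (hypothetical) role that should receive the escalation."""
    if escalation.legal_risk:
        return "legal_counsel"  # needs someone with legal authority
    if escalation.vip:
        return "senior_agent"  # needs authority to offer concessions
    return "tier1_support"
```

Legal risk is checked first on the assumption that it outranks account status; a real deployment would set that ordering according to its own policies.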

Observability and governance. Every decision, every handoff, every response needs to be logged, traceable, and auditable. In regulated industries — financial services, healthcare, logistics — this isn't optional. KPMG's survey found that 65% of enterprise leaders cite agentic system complexity as the top barrier to scaling AI, and the cure is governance infrastructure that provides visibility into how the entire multi-agent system behaves.
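At its simplest, traceability means every agent decision is written to an append-only log that can be replayed per ticket. The sketch below keeps entries in memory as JSON lines; the `AuditLog` class and its field names are illustrative assumptions, and a real system would write to durable storage.

```python
import json
from datetime import datetime, timezone
from typing import List


class AuditLog:
    """In-memory, append-only log of agent decisions, stored as JSON lines."""

    def __init__(self) -> None:
        self._lines: List[str] = []

    def record(self, ticket_id: str, agent: str, decision: str, reason: str) -> None:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "ticket_id": ticket_id,
            "agent": agent,
            "decision": decision,
            "reason": reason,
        }
        self._lines.append(json.dumps(entry, sort_keys=True))

    def trace(self, ticket_id: str) -> List[dict]:
        """Return every logged decision for one ticket, in the order recorded."""
        entries = [json.loads(line) for line in self._lines]
        return [entry for entry in entries if entry["ticket_id"] == ticket_id]


log = AuditLog()
log.record("T-1", "triage", "route:claims", "damage keywords found")
log.record("T-2", "triage", "route:billing", "invoice keywords found")
log.record("T-1", "qa", "approve", "policy check passed")
history = log.trace("T-1")
```

An auditor asking how ticket T-1 was handled gets the triage decision followed by the QA approval, each with its stated reason.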

The Human-in-the-Loop Imperative

Here's where the multi-agent conversation gets really interesting — and where most vendors get it wrong.

The goal of a multi-agent system isn't to remove humans from the loop. It's to put humans in the right part of the loop.

In a well-designed multi-agent system, humans serve three critical roles:

1. The Expert Escalation Point. When the AI team encounters something genuinely novel — a situation not covered by existing SOPs, a customer with a unique circumstance, a regulatory gray area — it escalates to a human expert. But unlike traditional escalation, the human receives a complete briefing: what the agents tried, what they found, what they think the answer might be, and why they're uncertain. The human doesn't start from scratch. They start from 80% done.

2. The Quality Calibrator. Human operators review a sample of AI-handled interactions, providing feedback that improves agent performance over time. This isn't just error correction — it's the mechanism by which the system learns organizational norms, communication styles, and judgment calls that can't be codified into rules. When a human says "this response was technically correct but too cold for a customer who just lost a $50,000 shipment," that feedback ripples through the system and makes every future interaction better.

3. The Override Authority. When an AI agent is about to take an action with significant consequences — approving a large claim, escalating to a regulatory body, offering a substantial concession — a human can review and approve before it executes. This isn't a bottleneck. It's a guardrail. And in enterprise operations, guardrails are what let you deploy AI with confidence instead of crossing your fingers and hoping.
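The override role can be sketched as an approval gate wrapped around high-consequence actions. The `APPROVAL_LIMIT` value, the action kinds, and the `approve` callback are assumptions for illustration; in practice the callback would be a review queue rather than a function.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    kind: str
    amount: float = 0.0


APPROVAL_LIMIT = 25_000  # hypothetical dollar limit for unattended actions


def needs_approval(action: Action) -> bool:
    """Flag actions with significant consequences for human review."""
    return action.kind == "regulatory_escalation" or action.amount > APPROVAL_LIMIT


def execute(action: Action, approve: Callable[[Action], bool]) -> str:
    """Run the action unless it needs approval and the human declines."""
    if needs_approval(action) and not approve(action):
        return "blocked"
    return "executed"
```

Low-stakes actions never call `approve`, so the human is consulted only where the consequences justify it.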

This human-in-the-loop architecture is what separates enterprise-grade multi-agent systems from the chatbot-with-a-new-name products flooding the market. It's also what makes the difference between the fewer than 10% of organizations that successfully scale AI agents and the more than 90% that don't.

Real-World Multi-Agent Patterns in Enterprise Operations

Let's walk through three concrete scenarios where multi-agent systems dramatically outperform single-agent approaches.

Scenario 1: Complex Freight Claim Resolution

A shipper submits a claim for $47,000 in damaged goods across three separate shipments involving two carriers.

Single-agent approach: The agent attempts to process all three claims simultaneously. It pulls documentation for the wrong shipment on carrier B's claim. It applies the wrong liability threshold because it confused domestic and international shipping rules. It generates a response that's 60% correct but contains a critical error on the highest-value claim. A human agent spends 90 minutes untangling the mess.

Multi-agent approach:

- The triage agent identifies this as a multi-shipment, multi-carrier claim and splits it into three sub-tasks

- Each sub-task routes to the claims specialist agent for the relevant carrier, which knows that carrier's specific dispute process, documentation requirements, and liability thresholds

- The compliance agent reviews each sub-claim against applicable regulations and flags that one shipment crossed international borders, requiring additional documentation

- The QA agent reviews the consolidated response, catches an inconsistency in damage valuation methodology, and sends it back for correction

- Because the total claim exceeds $25,000, the escalation agent routes the final package to a senior human claims adjuster with a complete briefing — all documentation organized, all carrier-specific requirements met, recommended resolution outlined with confidence scores

- The human adjuster reviews for 15 minutes, approves with one minor modification, and the claim is processed

Total time: 20 minutes, including the 15-minute human review. Accuracy: 99%. Customer experience: seamless.
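The splitting and threshold logic in this scenario can be sketched in a few lines. The $25,000 threshold and the $47,000 total come from the scenario; the per-shipment amounts, shipment IDs, and carrier labels are invented so the example adds up.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SubClaim:
    shipment_id: str
    carrier: str
    amount: int
    international: bool = False


ESCALATION_THRESHOLD = 25_000  # dollar threshold stated in the scenario


def group_by_carrier(sub_claims: List[SubClaim]) -> Dict[str, List[SubClaim]]:
    """Bucket sub-claims so each goes to the specialist for its carrier."""
    groups: Dict[str, List[SubClaim]] = defaultdict(list)
    for sub_claim in sub_claims:
        groups[sub_claim.carrier].append(sub_claim)
    return dict(groups)


def needs_customs_docs(sub_claims: List[SubClaim]) -> List[str]:
    """Return the shipment IDs the compliance agent should flag."""
    return [s.shipment_id for s in sub_claims if s.international]


def needs_senior_adjuster(sub_claims: List[SubClaim]) -> bool:
    """Escalate when the combined claim value exceeds the threshold."""
    return sum(s.amount for s in sub_claims) > ESCALATION_THRESHOLD


# Invented breakdown of the $47,000 claim: three shipments, two carriers.
claim = [
    SubClaim("S1", "carrier_a", 20_000),
    SubClaim("S2", "carrier_b", 15_000, international=True),
    SubClaim("S3", "carrier_b", 12_000),
]
```

No single shipment crosses the threshold on its own in this breakdown, which is why the check runs on the combined total rather than per sub-claim.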

Scenario 2: SLA-Critical Support Escalation

An enterprise customer with a $2M annual contract submits a support ticket at 4:47 PM on Friday. Their SLA guarantees a response within 1 hour and resolution within 4 hours.

Single-agent approach: The agent recognizes the SLA tier but doesn't understand the organizational implications. It generates a technically accurate but generic response. The customer, already frustrated, feels like they're talking to a machine. They email their account executive directly. Monday morning starts with a fire drill.

Multi-agent approach:

- The triage agent identifies the customer's tier, the SLA clock, and the time-sensitivity (Friday afternoon, limited weekend staffing)

- It routes simultaneously to the technical specialist agent for the product area and the relationship management agent that has context on this customer's history and communication preferences

- The technical agent diagnoses the issue and prepares a resolution path

- The relationship agent drafts a personalized response that acknowledges the customer's importance, references their specific environment, and sets accurate expectations about the resolution timeline

- The QA agent merges both outputs into a cohesive response

- The escalation agent proactively alerts the on-call senior engineer and the account executive that a high-value customer has an SLA-critical issue

- Response goes out in 12 minutes. Resolution is completed in 2.5 hours. The customer's account executive follows up Monday morning — not to fight a fire, but to reinforce the relationship
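The SLA arithmetic in this scenario is easy to check with a few lines of Python. The SLA windows and the timings come from the scenario; the calendar date is an arbitrary Friday chosen for illustration.

```python
from datetime import datetime, timedelta
from typing import Tuple

RESPONSE_SLA = timedelta(hours=1)
RESOLUTION_SLA = timedelta(hours=4)


def sla_deadlines(opened: datetime) -> Tuple[datetime, datetime]:
    """Return the response and resolution deadlines for a ticket."""
    return opened + RESPONSE_SLA, opened + RESOLUTION_SLA


def within_sla(opened: datetime, responded: datetime, resolved: datetime) -> bool:
    """Check both SLA clocks against the actual response and resolution times."""
    respond_by, resolve_by = sla_deadlines(opened)
    return responded <= respond_by and resolved <= resolve_by


opened = datetime(2026, 1, 2, 16, 47)  # 4:47 PM on a Friday (date is an assumption)
responded = opened + timedelta(minutes=12)
resolved = opened + timedelta(hours=2, minutes=30)
respond_by, resolve_by = sla_deadlines(opened)
```

The response is due by 5:47 PM and goes out at 4:59 PM; the resolution is due by 8:47 PM and lands at 7:17 PM, so both clocks are met with time to spare.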

Scenario 3: Cross-Platform Workflow Automation

A customer reports an issue that spans Salesforce (CRM record), Jira (engineering ticket), and Zendesk (support history). They've been bounced between three departments over two weeks.

Single-agent approach: The agent can only access one system. It sees part of the picture. It gives a partial answer. The customer has to re-explain their entire history for the fourth time.

Multi-agent approach:

- The triage agent recognizes this is a cross-platform issue and activates agents for each system

- The Salesforce agent pulls the CRM record, account history, and contract details

- The Jira agent retrieves the engineering ticket status, developer notes, and estimated fix timeline

- The Zendesk agent compiles the full support interaction history across all previous contacts

- The synthesis agent merges all three data sources into a unified picture

- The customer receives a single, comprehensive response that demonstrates the company finally understands their complete situation — across every system, every department, every previous interaction
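The fan-out and merge pattern in this scenario maps naturally onto concurrent calls. The sketch below uses Python's `asyncio.gather`; the three fetch functions are stubs that return made-up data and do not call the real Salesforce, Jira, or Zendesk APIs.

```python
import asyncio
from typing import Dict


async def fetch_salesforce(customer_id: str) -> dict:
    await asyncio.sleep(0)  # stands in for a real CRM API call
    return {"contract": "enterprise"}


async def fetch_jira(customer_id: str) -> dict:
    await asyncio.sleep(0)  # stands in for a real issue-tracker API call
    return {"fix_eta": "next sprint"}


async def fetch_zendesk(customer_id: str) -> dict:
    await asyncio.sleep(0)  # stands in for a real support-history API call
    return {"previous_contacts": 3}


async def synthesize(customer_id: str) -> Dict[str, dict]:
    """Query all three systems concurrently and merge the results."""
    crm, engineering, support = await asyncio.gather(
        fetch_salesforce(customer_id),
        fetch_jira(customer_id),
        fetch_zendesk(customer_id),
    )
    return {"crm": crm, "engineering": engineering, "support": support}


picture = asyncio.run(synthesize("C-42"))  # "C-42" is a hypothetical customer ID
```

Because the three lookups run concurrently, the synthesis step waits roughly as long as the slowest system rather than the sum of all three.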

This is the experience that turns a frustrated detractor into a loyal advocate. And it's only possible with multi-agent orchestration.

The Build vs. Buy Decision

If you're convinced that multi-agent systems are the future — and the data strongly suggests they are — the next question is whether to build or buy.

Building in-house gives you maximum control and customization. But the complexity is staggering. You need to design the orchestration layer, build and maintain each specialized agent, create the routing logic, implement the handoff protocols, build the observability infrastructure, and keep everything running reliably at scale. KPMG found that 65% of leaders identify this complexity as their top barrier. Building from scratch means hiring specialized AI engineers, investing months (or years) in development, and maintaining the system indefinitely.

Buying a platform gets you to production faster — if you choose the right one. But most platforms on the market in 2026 are single-agent systems wearing a multi-agent costume. They might have multiple "skills" or "modes," but underneath, it's one model trying to do everything. The telltale sign: they require months of training data and thousands of historical tickets before they can even start.

The right approach for most enterprises is a platform that provides multi-agent orchestration out of the box while allowing customization through your own SOPs, policies, and feedback. You shouldn't need to be an AI engineer to deploy a multi-agent system. You should be able to feed in your documentation, define your workflows, and let the platform handle the orchestration — while keeping your team in the loop for quality assurance, escalation, and continuous improvement.

What to Look for in a Multi-Agent Platform

Not all multi-agent systems are created equal. When evaluating platforms, here's what separates the real thing from marketing veneer:

SOP-driven configuration. The system should learn from your existing documentation — SOPs, policies, knowledge bases — not require thousands of historical tickets for training. If a vendor tells you they need 20,000 resolved tickets before they can help, they're building a single statistical model, not a multi-agent system.

Transparent agent roles. You should be able to see which agent handled which part of a workflow, what decisions it made, and why. Black-box systems that just produce an output without explaining how they got there are unacceptable in regulated industries and risky in any enterprise.

Human-in-the-loop by design. Escalation shouldn't be an afterthought or an error state. The best multi-agent systems treat human involvement as a feature — a deliberate part of the workflow that makes the entire system smarter over time.

Cross-platform integration. Your operations don't live in a single tool. Your AI shouldn't either. The platform should connect natively to your CRM, ticketing system, project management tools, communication channels, and internal databases.

Continuous learning. The system should get better every day based on outcomes, human feedback, and changing conditions. Static agents that perform the same in month twelve as month one aren't worth the investment.

Fast time-to-value. If deployment takes six months, you're paying for the vendor's engineering debt, not your own operational improvement. A modern multi-agent platform should be operational in days, not quarters.

The Future Is Orchestrated

The agentic AI market is projected to grow from $7.8 billion to over $52 billion by 2030. That growth won't come from building better chatbots. It will come from building orchestrated systems where specialized agents work together, learn from each other, and collaborate with human experts to handle the full complexity of enterprise operations.

The enterprises that move to multi-agent architectures in 2026 won't just improve their support metrics. They'll fundamentally change their cost structure, their speed of response, their ability to scale without proportional headcount growth, and their resilience against the operational complexity that's only increasing.

The single-agent era was a proof of concept. The multi-agent era is the product.

The only question is whether you'll lead the transition or scramble to catch up.

Ready to Move Beyond Single-Agent Limitations?

CorePiper's multi-agent platform deploys specialized AI agents that work from your SOPs, collaborate across your existing tools (Salesforce, Jira, Zendesk, and more), and keep your team in the loop for the decisions that matter. No months-long training period. No thousands of historical tickets required. Just your documentation, your workflows, and AI agents that start delivering value on day one.

Book a demo and see how orchestrated AI agents handle your real workflows: not a canned demo, but your actual operations.