What Klarna Got Wrong: Removing Humans from AI Support

Klarna replaced 700 customer service agents with AI, projected $40M in savings — then reversed course when quality collapsed. Here's why the 'replace humans entirely' approach to AI support fails, and what the human-in-the-loop alternative looks like.

Quick Answer: Klarna replaced 700 customer service agents with AI, projected $40M in annual savings, and reversed course 18 months later when customer satisfaction collapsed and complex cases went unresolved. The lesson: AI automation works for repetitive, well-defined workflows but degrades sharply on edge cases, escalations, and nuanced customer situations. Human-in-the-loop design — where AI handles volume and humans handle judgment — consistently outperforms full automation.

The Boldest Bet in Customer Service History

In 2023, Klarna's CEO Sebastian Siemiatkowski made a proclamation that echoed across the tech industry: "AI can already do all of the jobs that we, as humans, do."

It wasn't just talk. The Swedish fintech giant — one of Europe's largest buy-now-pay-later platforms — stopped all hiring, laid off approximately 700 customer service employees, and replaced them entirely with an OpenAI-powered AI assistant. The company went from roughly 3,000 outsourced support agents to a skeleton crew backstopped by chatbots.

The early numbers looked extraordinary. Within its first month, Klarna's AI assistant handled 2.3 million customer conversations across 23 markets in more than 35 languages. Average resolution time plummeted from 11 minutes to just 2 minutes — an 82% improvement. The company projected a $40 million profit improvement for 2024 from the efficiency gains alone. Repeat contacts dropped by 25%.

Wall Street loved it. Tech media amplified it. And for a brief window, Klarna became the poster child for a future where AI simply replaces human workers — cheaper, faster, always on.

Then reality set in.

The Unraveling: When Efficiency Kills Experience

By early 2025, the cracks in Klarna's AI-only approach had become impossible to ignore. Internal reviews and mounting customer feedback revealed systemic problems that no amount of prompt engineering could fix.

Customers complained about robotic, generic responses. The AI assistant excelled at pattern-matching common questions — checking order status, processing basic returns, explaining payment schedules. But the moment a conversation veered into anything nuanced, emotionally charged, or contextually complex, the system broke down.

Complex problems created frustrating loops. Customers with billing disputes, fraud concerns, or multi-step issues found themselves trapped in what one Reddit user described as a "Kafkaesque loop" — explaining their problem to the AI, getting a canned response, being told to rephrase, and eventually having to repeat everything to a human agent when they finally reached one. The 2-minute resolution time metric was misleading: it measured AI response speed, not whether the customer's problem was actually resolved.

Empathy-dependent interactions collapsed. Financial services aren't like checking the weather. When someone's payment is declined at a checkout counter, when a billing error threatens their credit score, when a disputed charge means they can't pay rent — they need more than a statistically optimized response. They need someone who understands the gravity of the situation. AI systems, no matter how sophisticated, fundamentally lack the emotional intelligence to navigate these moments.

Customer satisfaction scores declined significantly. While Klarna's initial press release claimed AI satisfaction scores were "on par" with human agents, subsequent independent analysis told a different story. As the AI handled an increasing share of complex issues it wasn't designed for, overall service quality deteriorated measurably.

The most telling signal: customer complaints about Klarna's support flooded social media platforms, consumer review sites, and regulatory bodies throughout late 2024 and into 2025.

The Reversal Nobody Predicted

In May 2025, Siemiatkowski made an admission that stunned the industry. Speaking to Bloomberg, the CEO who had once said AI could take everyone's job — including his own — conceded that Klarna's AI-first approach "wasn't the right one."

"We focused too much on efficiency and cost," Siemiatkowski told reporters. "The result was lower quality, and that's not sustainable."

The company announced it would begin rehiring human customer service agents, specifically targeting students, rural populations, and dedicated Klarna users who could bring both empathy and product knowledge to the role. By September 2025, Business Insider reported that Klarna was actively reassigning internal employees to customer support roles after AI quality concerns continued to mount.

Siemiatkowski's new position was a complete 180: "From a brand perspective, I just think it's so critical that you are clear to your customer that there will always be a human if you want."

The company now describes its approach as a "dual-track" model — AI for scalable efficiency, humans for quality and empathy. It was a tacit admission that the original all-AI vision had failed.

Klarna Is Not an Outlier

Here's what makes Klarna's story important beyond the headlines: they're not the only company that made this mistake. They're simply the most visible.

Research from Orgvue and Forrester found that 55% of companies that rushed to replace human workers with AI now regret the decision. The pattern is remarkably consistent across industries:

- Phase 1 — The Honeymoon: Initial deployment shows impressive metrics. Resolution times drop. Costs plummet. Leadership celebrates.

- Phase 2 — The Drift: Edge cases accumulate. Customer satisfaction subtly declines. The metrics that looked good were measuring the wrong things.

- Phase 3 — The Reckoning: Increased customer churn. Reputation damage. Expensive rehiring. Lost institutional knowledge from employees who already left.

A 2026 SurveyMonkey study drives the point home: 79% of American consumers still prefer human customer service over AI. Not because they hate technology — but because they've learned, through painful experience with companies like Klarna, that AI-only support often means their problem won't actually get solved.

According to Gartner, contact center labor cost savings from AI are projected to reach $80 billion by the end of 2026. But this projection assumes AI is used alongside humans — not instead of them. The companies chasing savings by eliminating humans entirely are the ones discovering that "savings" from destroyed customer relationships aren't savings at all.

Why "Replace" Fails and "Augment" Succeeds

The Klarna story crystallizes a fundamental truth about AI in customer operations: the question was never "can AI do this job?" — it was "should AI do this job alone?"

The answer, overwhelmingly, is no. And the reasons are structural, not temporary.

The Long Tail Problem

AI systems — even the best large language models — are trained on patterns. They excel at the head of the distribution: the common questions, the standard workflows, the predictable interactions. But customer support follows a power law. The top 20% of issue types might cover 60-70% of volume, but the remaining 30-40% is a sprawling long tail of edge cases, exceptions, multi-system failures, and novel situations.

Every enterprise that deploys AI-only support eventually collides with this long tail. And in a domain like financial services, where a single mishandled edge case can trigger a regulatory complaint or viral social media backlash, the long tail isn't a rounding error — it's an existential risk.
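To make the head-versus-tail split concrete, here is a minimal sketch that assumes issue-type volume follows a Zipf-like distribution. The 100 issue types and the exponent of 1.0 are illustrative assumptions, not measured support data, and `top_share` is a hypothetical helper name.

```python
def top_share(num_issue_types: int, top_fraction: float, exponent: float = 1.0) -> float:
    """Share of total ticket volume covered by the most common issue types.

    Assumes volume for the issue type at a given rank is proportional to
    1 / rank**exponent (a Zipf-like power law). This is an assumption for
    illustration only.
    """
    weights = [1.0 / (rank ** exponent) for rank in range(1, num_issue_types + 1)]
    top_n = max(1, round(num_issue_types * top_fraction))
    return sum(weights[:top_n]) / sum(weights)


# Hypothetical support queue with 100 distinct issue types.
share = top_share(num_issue_types=100, top_fraction=0.20)
print(f"Top 20% of issue types cover {share:.0%} of volume")
print(f"The long tail covers the remaining {1 - share:.0%}")
```

Under these assumptions the top 20% of issue types cover a little under 70% of volume, which leaves roughly a third of all tickets spread across the other 80 issue types. That tail is too large to treat as an exception path.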

The Context Window Problem

Even with modern context windows of 100K+ tokens, AI systems struggle with the kind of context that matters most in customer support: organizational context. Knowing that this particular customer was promised an exception by a manager last week. Understanding that this product line has a known defect that hasn't been publicly acknowledged yet. Recognizing that the customer's frustration isn't really about the shipping delay — it's about the three previous shipping delays that were never properly addressed.

Human agents build this context through experience, institutional knowledge, and the kind of lateral thinking that comes from actually working within an organization. No amount of RAG (Retrieval-Augmented Generation) fully replicates this.

The Trust Problem

Financial services, healthcare, insurance, and other high-stakes industries depend on trust. Trust is built through demonstrated competence in difficult situations — not in easy ones. When a customer calls about a routine question and gets an instant AI answer, that's convenient. When a customer calls about a disputed $3,000 charge and gets a human who listens, investigates, and resolves the issue with genuine empathy, that's trust-building.

AI can deliver convenience. Only humans can build trust. Companies that sacrifice trust for convenience eventually lose both.

The Human-in-the-Loop Alternative

So if full replacement doesn't work, what does?

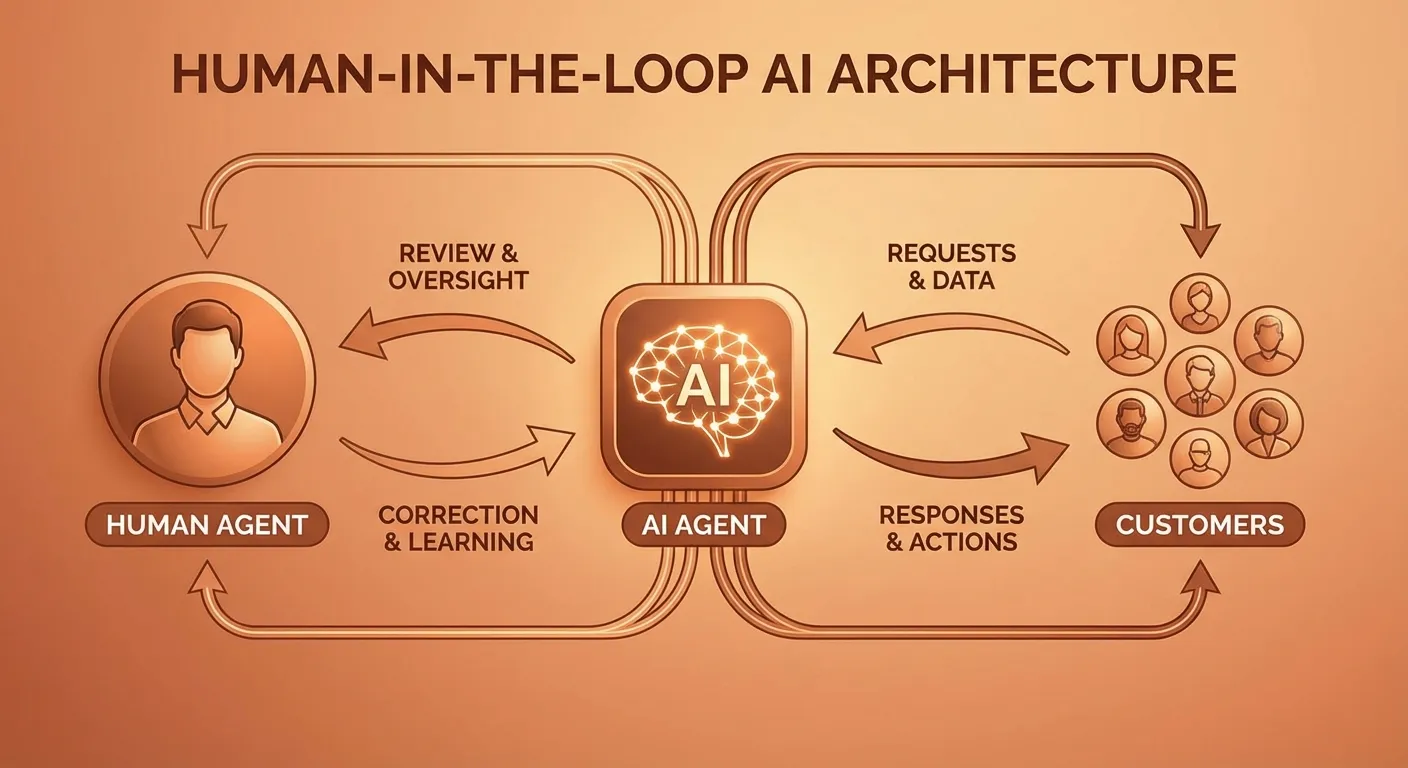

The answer — increasingly validated by enterprise deployments across industries — is Human-in-the-Loop (HITL) AI. This isn't a compromise or a halfway measure. It's a fundamentally different architecture that leverages the strengths of both AI and humans while covering each other's weaknesses.

How HITL Actually Works

In a well-designed HITL system, AI agents handle the first line of customer interaction. They triage requests, resolve straightforward issues, gather context, and prepare the groundwork for human intervention when needed. The key difference from Klarna's original approach: the system is designed from day one to include human escalation as a core feature, not a failure state.

Here's what that looks like in practice:

- AI handles Tier-0 and Tier-1: Order status checks, password resets, basic FAQ responses, payment schedule inquiries. These represent 60-70% of ticket volume and can be resolved instantly with high accuracy.

- AI triages and prepares Tier-2: For complex issues, the AI doesn't try to solve the problem alone. Instead, it classifies the issue, gathers relevant context (order history, previous interactions, account status), and routes the ticket to the right human agent with a pre-built summary. The human agent starts informed rather than cold.

- Humans handle Tier-2 and Tier-3: Billing disputes, fraud investigations, escalated complaints, and any situation requiring judgment, empathy, or creative problem-solving go directly to trained human agents.

- AI learns from human corrections: This is the crucial feedback loop that makes HITL systems improve over time. When a human agent resolves an issue that the AI couldn't, that resolution becomes training data. The AI learns not just what the right answer was, but the reasoning pattern that led to it. Over weeks and months, the AI's capability boundary expands, not through a one-off fine-tuning project, but because the system keeps learning from real-world expert behavior.
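The tiers above can be sketched as a small routing function. This is a simplified illustration, not any vendor's implementation: the category names, the `CONFIDENCE_FLOOR` threshold, and the `Ticket`, `Routing`, `route`, and `record_correction` names are all hypothetical, and a real system would derive the category map from its own SOPs.

```python
from dataclasses import dataclass, field
from enum import Enum


class Tier(Enum):
    AI_RESOLVES = "tier_0_1"        # AI resolves end to end
    HUMAN_WITH_AI_PREP = "tier_2"   # AI gathers context, a human resolves
    HUMAN_ONLY = "tier_3"           # human judgment required throughout


# Hypothetical category-to-tier map (assumption for illustration).
CATEGORY_TIERS = {
    "order_status": Tier.AI_RESOLVES,
    "password_reset": Tier.AI_RESOLVES,
    "payment_schedule": Tier.AI_RESOLVES,
    "billing_dispute": Tier.HUMAN_WITH_AI_PREP,
    "escalated_complaint": Tier.HUMAN_WITH_AI_PREP,
    "fraud_investigation": Tier.HUMAN_ONLY,
}
CONFIDENCE_FLOOR = 0.8  # assumed threshold below which the AI does not act alone


@dataclass
class Ticket:
    ticket_id: str
    category: str
    confidence: float               # classifier confidence in the category
    message: str
    order_history: list = field(default_factory=list)
    previous_contacts: int = 0


@dataclass
class Routing:
    ticket_id: str
    tier: Tier
    owner: str                      # "ai" or "human"
    summary: str = ""               # pre-built context for the human agent


def route(ticket: Ticket) -> Routing:
    # Unknown categories default to a human, never to the AI.
    tier = CATEGORY_TIERS.get(ticket.category, Tier.HUMAN_WITH_AI_PREP)
    if tier is Tier.AI_RESOLVES and ticket.confidence >= CONFIDENCE_FLOOR:
        return Routing(ticket.ticket_id, tier, owner="ai")
    if tier is Tier.AI_RESOLVES:
        # Low confidence on a routine category: escalate instead of guessing.
        tier = Tier.HUMAN_WITH_AI_PREP
    summary = (
        f"category={ticket.category}; "
        f"previous_contacts={ticket.previous_contacts}; "
        f"recent_orders={ticket.order_history[-3:]}; "
        f"customer_said={ticket.message!r}"
    )
    return Routing(ticket.ticket_id, tier, owner="human", summary=summary)


# Feedback loop: human resolutions are stored as future training examples.
corrections = []


def record_correction(ticket: Ticket, human_resolution: str) -> None:
    corrections.append({
        "category": ticket.category,
        "message": ticket.message,
        "resolution": human_resolution,
    })


dispute = Ticket("t-2", "billing_dispute", 0.97, "I was charged twice",
                 order_history=["A1", "A2"], previous_contacts=2)
decision = route(dispute)
print(decision.owner, decision.tier.value)
print(decision.summary)
record_correction(dispute, "Refunded duplicate charge after checking the ledger")
```

The design choice worth noting is that escalation is the default branch. The AI only keeps a ticket when the category is on the routine list and the classifier is confident; everything else reaches a human with a summary already attached.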

The Metrics That Actually Matter

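Klarna measured resolution time and cost per interaction, and by those metrics its AI-only approach looked like a triumph. But those are efficiency metrics, not outcome metrics: they show how quickly and cheaply a conversation ended, not whether the customer's problem was solved.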

HITL systems measure what actually matters:

- First-Contact Resolution Rate (FCR): Was the customer's problem actually solved, or did they have to come back? HITL systems consistently achieve FCR rates of 85-92%, compared to 60-70% for AI-only deployments.

- Customer Effort Score (CES): How hard did the customer have to work to get their issue resolved? AI-only systems often score poorly here because customers expend significant effort trying to get the AI to understand their actual problem.

- Net Promoter Score (NPS): Would the customer recommend the company based on their support experience? This is where the trust factor shows up in hard numbers.

- Escalation Accuracy: When the AI does escalate to a human, is it routing to the right team with the right context? Poor escalation accuracy, a hallmark of bolt-on human fallback, destroys agent productivity and customer patience alike.
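As a rough illustration of how these outcome metrics differ from a simple closure count, the sketch below computes them from ticket records. The `TicketOutcome` fields, the repeat-contact window, and the sample data are assumptions made up for this example; the NPS calculation uses the standard definition (percentage of 9-10 scores minus percentage of 0-6 scores).

```python
from dataclasses import dataclass


@dataclass
class TicketOutcome:
    closed: bool
    repeat_contact: bool        # same issue came back within an assumed window
    escalated: bool = False
    right_team: bool = False    # escalation reached the correct team
    had_context: bool = False   # escalation arrived with a usable summary


def closure_rate(outcomes):
    """Share of tickets marked closed, regardless of whether the fix held."""
    return sum(o.closed for o in outcomes) / len(outcomes)


def first_contact_resolution(outcomes):
    """Share of tickets closed with no repeat contact about the same issue."""
    resolved = sum(o.closed and not o.repeat_contact for o in outcomes)
    return resolved / len(outcomes)


def escalation_accuracy(outcomes):
    """Share of escalations that reached the right team with context."""
    escalated = [o for o in outcomes if o.escalated]
    if not escalated:
        return 0.0
    good = sum(o.right_team and o.had_context for o in escalated)
    return good / len(escalated)


def net_promoter_score(scores):
    """Standard NPS: percent promoters (9-10) minus percent detractors (0-6)."""
    promoters = sum(s >= 9 for s in scores)
    detractors = sum(s <= 6 for s in scores)
    return 100.0 * (promoters - detractors) / len(scores)


# Made-up sample: every ticket is closed, but not every problem is solved.
outcomes = [
    TicketOutcome(closed=True, repeat_contact=False),
    TicketOutcome(closed=True, repeat_contact=True),  # auto-closed, customer returned
    TicketOutcome(True, False, escalated=True, right_team=True, had_context=True),
    TicketOutcome(True, True, escalated=True, right_team=False, had_context=False),
    TicketOutcome(True, False, escalated=True, right_team=True, had_context=False),
]

print(f"Closure rate:        {closure_rate(outcomes):.0%}")
print(f"FCR:                 {first_contact_resolution(outcomes):.0%}")
print(f"Escalation accuracy: {escalation_accuracy(outcomes):.0%}")
print(f"NPS:                 {net_promoter_score([10, 9, 8, 6]):.0f}")
```

On this sample every ticket is closed, yet only three of five are resolved on first contact and only one of three escalations arrives at the right team with context. A dashboard that reports closure alone would hide both gaps.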

What This Means for Enterprise Buyers

If you're evaluating AI solutions for customer operations, IT service management, or any other support function, Klarna's story should fundamentally shape your evaluation criteria.

Red Flags to Watch For

"Our AI resolves 90%+ of tickets autonomously." Ask what "resolve" means. If it means the AI responded and the ticket was auto-closed, that's not resolution — that's suppression. Real resolution means the customer's problem is actually fixed, and they don't come back about the same issue.

"You can eliminate your Tier-1 team." Any vendor promising workforce elimination as a primary value proposition is selling you the Klarna playbook. You'll end up rehiring in 18 months.

"We don't need access to your SOPs or knowledge base." AI that doesn't learn from your organization's actual processes and policies will generate plausible-sounding but organizationally incorrect answers. In regulated industries, this creates compliance risk.

Green Flags to Look For

- Built-in human escalation paths that are designed as core architecture, not emergency fallback.

- Continuous learning from human corrections that measurably improves AI accuracy over time.

- SOP-driven operation where the AI follows your actual documented processes rather than generating responses from generic training data.

- Transparent metrics that include outcome measurements (FCR, CES, NPS) alongside efficiency measurements (resolution time, cost per ticket).

- Cross-platform integration so the AI can operate across your actual tech stack (Salesforce, Zendesk, Jira, ServiceNow) rather than requiring you to consolidate onto a single platform.

The Lesson Klarna Learned the Hard Way

Klarna's journey, from laying off 700 employees to hiring human agents again within two years, will be studied in business schools for the next decade. Not because AI failed, but because the implementation philosophy failed.

The AI worked. The chatbot was fast, multilingual, and efficient. But speed without accuracy is just fast failure. Efficiency without empathy is just cheap indifference. And cost savings that erode customer trust aren't savings — they're deferred losses with interest.

Siemiatkowski himself now acknowledges this. His company's "dual-track" approach — AI for scale, humans for quality — is essentially the HITL model that companies like CorePiper have been building from day one. The difference is that CorePiper and similar platforms didn't need a $40 million lesson and a public reversal to arrive at this architecture. They started there.

The future of AI in customer operations isn't about replacing humans. It's about making humans superhuman — giving them AI-powered tools that handle the repetitive work, surface the right context at the right time, and continuously learn from expert human judgment.

That's not a compromise. That's the only approach that actually works at enterprise scale.

What Comes Next

As we move deeper into 2026, the industry is beginning to internalize the Klarna lesson. Gartner now explicitly recommends human-in-the-loop architectures for customer-facing AI deployments. Forrester's latest Wave reports penalize vendors that lack human escalation capabilities. And enterprise buyers are increasingly asking the right question: not "how much can we automate?" but "how do we automate in a way that makes our support better, not just cheaper?"

The companies that get this right — the ones that treat AI as an amplifier for human capability rather than a replacement for human judgment — will define the next era of customer operations.

The ones that follow Klarna's original playbook will learn the same lesson. They'll just pay more for the education.

Ready to Build AI Support That Actually Works?

CorePiper takes a different approach from day one. Our AI agents are built with human-in-the-loop architecture at the core — not as an afterthought. They follow your SOPs, learn from your team's corrections, and seamlessly escalate to human agents when the situation demands empathy, judgment, or expertise.

No 18-month reversal. No rehiring the team you just let go. Just AI that makes your support team faster, more accurate, and more effective from day one.